A hybrid automated system (HAS) aims to integrate the capabilities of artificially intelligent machines (based on computer technology) with the capacities of the people who interact with these machines in the course of their work activities. The principal concerns of HAS utilization relate to how the human and machine subsystems should be designed in order to make the best use of the knowledge and skills of both parts of the hybrid system, and how the human operators and machine components should interact with each other to assure their functions complement one another. Many hybrid automated systems have evolved as the products of applications of modern information- and control-based methodologies to automate and integrate different functions of often complex technological systems. HAS was originally identified with the introduction of computer-based systems used in the design and operation of real-time control systems for nuclear power reactors, for chemical processing plants and for discrete parts-manufacturing technology. HAS can now also be found in many service industries, such as air traffic control and aircraft navigation procedures in the civil aviation area, and in the design and use of intelligent vehicle and highway navigation systems in road transportation.

With continuing progress in computer-based automation, the nature of human tasks in modern technological systems shifts from those that require perceptual-motor skills to those calling for cognitive activities, which are needed for problem solving, for decision making in system monitoring, and for supervisory control tasks. For example, the human operators in computer-integrated manufacturing systems primarily act as system monitors, problem solvers and decision makers. The cognitive activities of the human supervisor in any HAS environment are (1) planning what should be done for a given period of time, (2) devising procedures (or steps) to achieve the set of planned goals, (3) monitoring the progress of (technological) processes, (4) “teaching” the system through a human-interactive computer, (5) intervening if the system behaves abnormally or if the control priorities change and (6) learning through feedback from the system about the impact of supervisory actions (Sheridan 1987).

Hybrid System Design

The human-machine interactions in a HAS involve utilization of dynamic communication loops between the human operators and intelligent machines—a process that includes information sensing and processing and the initiation and execution of control tasks and decision making—within a given structure of function allocation between humans and machines. At a minimum, the interactions between people and automation should reflect the high complexity of hybrid automated systems, as well as relevant characteristics of the human operators and task requirements. Therefore, the hybrid automated system can be formally defined as a quintuple in the following formula:

HAS = (T, U, C, E, I)

where T = task requirements (physical and cognitive); U = user characteristics (physical and cognitive); C = the automation characteristics (hardware and software, including computer interfaces); E = the system’s environment; I = a set of interactions among the above elements.

The set of interactions I embodies all possible interactions between T, U and C in E regardless of their nature or strength of association. For example, one of the possible interactions might involve the relation of the data stored in the computer memory to the corresponding knowledge, if any, of the human operator. The interactions I can be elemental (i.e., limited to a one-to-one association), or complex, such as would involve interactions between the human operator, the particular software used to achieve the desired task, and the available physical interface with the computer.
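The quintuple and the distinction between elemental and complex interactions can be pictured as a small data structure. The following is only a minimal sketch: the class name, the field names and the example elements are hypothetical, and reading "elemental" as an interaction between exactly two elements is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HybridAutomatedSystem:
    """HAS = (T, U, C, E, I); all field contents below are illustrative."""
    task_requirements: frozenset           # T
    user_characteristics: frozenset        # U
    automation_characteristics: frozenset  # C
    environment: str                       # E
    interactions: frozenset                # I, each interaction is a frozenset of elements


def is_elemental(interaction: frozenset) -> bool:
    """Assumption: an elemental interaction is a one-to-one association (two elements)."""
    return len(interaction) == 2


# Elemental: stored data related to the operator's corresponding knowledge.
memory_vs_knowledge = frozenset({"stored data", "operator knowledge"})
# Complex: operator, task software and physical interface together.
operator_software_interface = frozenset(
    {"operator", "task software", "physical interface"}
)

has = HybridAutomatedSystem(
    task_requirements=frozenset({"monitor process"}),
    user_characteristics=frozenset({"operator", "operator knowledge"}),
    automation_characteristics=frozenset(
        {"stored data", "task software", "physical interface"}
    ),
    environment="control room",
    interactions=frozenset({memory_vs_knowledge, operator_software_interface}),
)

elemental = [i for i in has.interactions if is_elemental(i)]
print(len(elemental), "elemental interaction(s) out of", len(has.interactions))
```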

Designers of many hybrid automated systems focus primarily on the computer-aided integration of sophisticated machines and other equipment as parts of computer-based technology, rarely paying much attention to the paramount need for effective human integration within such systems. Therefore, at present, many of the computer-integrated (technological) systems are not fully compatible with the inherent capabilities of the human operators as expressed by the skills and knowledge necessary for the effective control and monitoring of these systems. Such incompatibility arises at all levels of human, machine and human-machine functioning, and can be defined within a framework of the individual and the entire organization or facility. For example, the problems of integrating people and technology in advanced manufacturing enterprises occur early in the HAS design stage. These problems can be conceptualized using the following system integration model of the complexity of interactions, I, between the system designers (D), the human operators or potential system users (H) and the technology (T, which here denotes technology rather than the task requirements of the quintuple above):

I (H, T) = F [ I (H, D), I (D, T)]

where I stands for relevant interactions taking place in a given HAS’s structure, while F indicates functional relationships between designers, human operators and technology.

The above system integration model highlights the fact that the interactions between the users and technology are determined by the outcome of the integration of the two earlier interactions—namely, (1) those between HAS designers and potential users and (2) those between the designers and the HAS technology (at the level of machines and their integration). It should be noted that even though strong interactions typically exist between the designers and technology, only very few examples of equally strong interrelationships between designers and human operators can be found.

It can be argued that even in the most automated systems, the human role remains critical to successful system performance at the operational level. Bainbridge (1983) identified a set of problems relevant to the operation of the HAS which are due to the nature of automation itself, as follows:

- Operators “out of the control loop”. The human operators are present in the system to exercise control when needed, but by being “out of the control loop” they fail to maintain the manual skills and long-term system knowledge that are often required in case of an emergency.

- Outdated “mental picture”. The human operators may not be able to respond quickly to changes in the system behaviour if they have not been following the events of its operation very closely. Furthermore, the operators’ knowledge or mental picture of the system functioning may be inadequate to initiate or exercise required responses.

- Disappearing generations of skills. New operators may not be able to acquire sufficient knowledge about the computerized system achieved through experience and, therefore, will be unable to exercise effective control when needed.

- Authority of automatics. If the computerized system has been implemented because it can perform the required tasks better than the human operator, the question arises, “On what basis should the operator decide that correct or incorrect decisions are being made by the automated systems?”

- Emergence of the new types of “human errors” due to automation. Automated systems lead to new types of errors and, consequently, accidents which cannot be analysed within the framework of traditional techniques of analysis.

Task Allocation

One of the important issues for HAS design is to determine how many and which functions or responsibilities should be allocated to the human operators, and which and how many to the computers. Generally, there are three basic classes of task allocation problems that should be considered: (1) the human supervisor–computer task allocation, (2) the human–human task allocation and (3) the supervisory computer–computer task allocation. Ideally, the allocation decisions should be made through some structured allocation procedure before the basic system design is begun. Unfortunately such a systematic process is seldom possible, as the functions to be allocated may either need further examination or must be carried out interactively between the human and machine system components—that is, through application of the supervisory control paradigm. Task allocation in hybrid automated systems should focus on the extent of the human and computer supervisory responsibilities, and should consider the nature of interactions between the human operator and computerized decision support systems. The means of information transfer between machines and the human input-output interfaces and the compatibility of software with human cognitive problem-solving abilities should also be considered.

In traditional approaches to the design and management of hybrid automated systems, workers were considered as deterministic input-output systems, and there was a tendency to disregard the teleological nature of human behaviour—that is, the goal-oriented behaviour relying on the acquisition of relevant information and the selection of goals (Goodstein et al. 1988). To be successful, the design and management of advanced hybrid automated systems must be based on a description of the human mental functions needed for a specific task. The “cognitive engineering” approach (described further below) proposes that human-machine (hybrid) systems need to be conceived, designed, analysed and evaluated in terms of human mental processes (i.e., the operator’s mental model of the adaptive systems is taken into account). The following are the requirements of the human-centred approach to HAS design and operation as formulated by Corbett (1988):

- Compatibility. System operation should not require skills unrelated to existing skills, but should allow existing skills to evolve. The human operator should input and receive information which is compatible with conventional practice in order that the interface conform to the user’s prior knowledge and skill.

- Transparency. One cannot control a system without understanding it. Therefore, the human operator must be able to “see” the internal processes of the system’s control software if learning is to be facilitated. A transparent system makes it easy for users to build up an internal model of the decision-making and control functions that the system can perform.

- Minimum shock. The system should not do anything which operators find unexpected in the light of the information available to them, detailing the present state of the system.

- Disturbance control. Uncertain tasks (as defined by the choice structure analysis) should be under human operator control with computer decision-making support.

- Fallibility. The implicit skills and knowledge of the human operators should not be designed out of the system. The operators should never be put in a position where they helplessly watch the software direct an incorrect operation.

- Error reversibility. Software should supply sufficient feedforward of information to inform the human operator of the likely consequences of a particular operation or strategy.

- Operating flexibility. The system should offer human operators the freedom to trade off requirements and resource limits by shifting operating strategies without losing the control software support.

Cognitive Human Factors Engineering

Cognitive human factors engineering focuses on how human operators make decisions at the workplace, solve problems, formulate plans and learn new skills (Hollnagel and Woods 1983). The roles of the human operators functioning in any HAS can be classified using Rasmussen’s scheme (1983) into three major categories:

- Skill-based behaviour is the sensory-motor performance executed during acts or activities which take place without conscious control as smooth, automated and highly integrated patterns of behaviour. Human activities that fall under this category are considered to be a sequence of skilled acts composed for a given situation. Skill-based behaviour is thus the expression of more or less stored patterns of behaviours or pre-programmed instructions in a space-time domain.

- Rule-based behaviour is a goal-oriented category of performance structured by feedforward control through a stored rule or procedure—that is, an ordered performance allowing a sequence of subroutines in a familiar work situation to be composed. The rule is typically selected from previous experiences and reflects the functional properties which constrain the behaviour of the environment. Rule-based performance is based on explicit know-how as regards employing the relevant rules. The decision data set consists of references for recognition and identification of states, events or situations.

- Knowledge-based behaviour is a category of goal-controlled performance, in which the goal is explicitly formulated based on knowledge of the environment and the aims of the person. The internal structure of the system is represented by a “mental model”. This kind of behaviour allows the development and testing of different plans under unfamiliar and, therefore, uncertain control conditions, and is needed when skills or rules are either unavailable or inadequate so that problem solving and planning must be called upon instead.

In the design and management of a HAS, one should consider the cognitive characteristics of the workers in order to assure the compatibility of system operation with the worker’s internal model that describes its functions. Consequently, the system’s description level should be shifted from the skill-based to the rule-based and knowledge-based aspects of human functioning, and appropriate methods of cognitive task analysis should be used to identify the operator’s model of a system. A related issue in the development of a HAS is the design of means of information transmission between the human operator and automated system components, at both the physical and the cognitive levels. Such information transfer should be compatible with the modes of information utilized at different levels of system operation (that is, visual, verbal, tactile or hybrid). This informational compatibility keeps the mismatch between the transmission medium and the nature of the information to a minimum. For example, a visual display is best for transmission of spatial information, while auditory input may be used to convey textual information.

Quite often the human operator develops an internal model that describes the operation and function of the system according to his or her experience, training and instructions in connection with the given type of human-machine interface. In light of this reality, the designers of a HAS should attempt to build into the machines (or other artificial systems) a model of the human operator’s physical and cognitive characteristics—that is, the system’s image of the operator (Hollnagel and Woods 1983). The designers of a HAS must also take into consideration the level of abstraction in the system description as well as various relevant categories of the human operator’s behaviour. These levels of abstraction for modelling human functioning in the working environment are as follows (Rasmussen 1983): (1) physical form (anatomical structure), (2) physical functions (physiological functions), (3) generalized functions (psychological mechanisms and cognitive and affective processes), (4) abstract functions (information processing) and (5) functional purpose (value structures, myths, religions, human interactions). These five levels must be considered simultaneously by the designers in order to ensure effective HAS performance.

System Software Design

Since the computer software is a primary component of any HAS environment, software development, including design, testing, operation and modification, and software reliability issues must also be considered at the early stages of HAS development. By this means, one should be able to lower the cost of software error detection and elimination. It is difficult, however, to estimate the reliability of the human components of a HAS, on account of limitations in our ability to model human task performance, the related workload and potential errors. Excessive or insufficient mental workload may lead to information overload and boredom, respectively, and may result in degraded human performance, leading to errors and the increasing probability of accidents. The designers of a HAS should employ adaptive interfaces, which utilize artificial intelligence techniques, to solve these problems. In addition to human-machine compatibility, the issue of human-machine adaptability to each other must be considered in order to reduce the stress levels that come about when human capabilities may be exceeded.

Due to the high level of complexity of many hybrid automated systems, identification of any potential hazards related to the hardware, software, operational procedures and human-machine interactions of these systems becomes critical to the success of efforts aimed at reduction of injuries and equipment damage. Safety and health hazards associated with complex hybrid automated systems, such as computer-integrated manufacturing technology (CIM), are clearly among the most critical aspects of system design and operation.

System Safety Issues

Hybrid automated environments, with their significant potential for erratic behaviour of the control software under system disturbance conditions, create a new generation of accident risks. As hybrid automated systems become more versatile and complex, system disturbances, including start-up and shut-down problems and deviations in system control, can significantly increase the possibility of serious danger to the human operators. Ironically, in many abnormal situations, operators usually rely on the proper functioning of the automated safety subsystems, a practice which may increase the risk of severe injury. For example, a study of accidents related to malfunctions of technical control systems showed that about one-third of the accident sequences included human intervention in the control loop of the disturbed system.

Since traditional safety measures cannot be easily adapted to the needs of HAS environments, injury control and accident prevention strategies need to be reconsidered in view of the inherent characteristics of these systems. For example, in the area of advanced manufacturing technology, many processes are characterized by the existence of substantial amounts of energy flows which cannot be easily anticipated by the human operators. Furthermore, safety problems typically emerge at the interfaces between subsystems, or when system disturbances progress from one subsystem to another. According to the International Organization for Standardization (ISO 1991), the risks associated with hazards due to industrial automation vary with the types of industrial machines incorporated into the specific manufacturing system and with the ways in which the system is installed, programmed, operated, maintained and repaired. For example, a comparison of robot-related accidents in Sweden to other types of accidents showed that robots may be the most hazardous industrial machines used in advanced manufacturing industry. The estimated accident rate for industrial robots was one serious accident per 45 robot-years, a higher rate than that for industrial presses, which was reported to be one accident per 50 machine-years. It should be noted here that industrial presses in the United States accounted for about 23% of all metalworking machine-related fatalities for the 1980–1985 period, with power presses ranked first with respect to the severity-frequency product for non-fatal injuries.
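The two accident rates quoted above can be put on a common footing with a few lines of arithmetic. This is only an illustrative sketch: the variable names are hypothetical, and the comparison inherits the caveat that the robot figure counts serious accidents whereas the press figure is reported simply as accidents.

```python
from fractions import Fraction

# Figures quoted in the text.
robot_rate = Fraction(1, 45)  # serious accidents per robot-year
press_rate = Fraction(1, 50)  # accidents per machine-year

# Express both as accidents per 1,000 machine-years for easier comparison.
robots_per_1000 = float(robot_rate * 1000)
presses_per_1000 = float(press_rate * 1000)

# Relative rate of robots versus presses (50/45 = 10/9).
ratio = robot_rate / press_rate

print(f"robots:  {robots_per_1000:.1f} per 1,000 robot-years")
print(f"presses: {presses_per_1000:.1f} per 1,000 machine-years")
print(f"ratio:   {float(ratio):.2f}")
```

On these figures the robot rate is about 11% higher than the press rate.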

In the domain of advanced manufacturing technology, there are many moving parts which are hazardous to workers as they change their position in a complex manner outside the visual field of the human operators. Rapid technological developments in computer-integrated manufacturing created a critical need to study the effects of advanced manufacturing technology on the workers. In order to identify the hazards caused by various components of such a HAS environment, past accidents need to be carefully analysed. Unfortunately, accidents involving robot use are difficult to isolate from reports of human operated machine-related accidents, and, therefore, there may be a high percentage of unrecorded accidents. The occupational health and safety rules of Japan state that “industrial robots do not at present have reliable means of safety and workers cannot be protected from them unless their use is regulated”. For example, the results of the survey conducted by the Labour Ministry of Japan (Sugimoto 1987) of accidents related to industrial robots across the 190 factories surveyed (with 4,341 working robots) showed that there were 300 robot-related disturbances, of which 37 cases of unsafe acts resulted in some near accidents, 9 were injury-producing accidents, and 2 were fatal accidents. The results of other studies indicate that computer-based automation does not necessarily increase the overall level of safety, as the system hardware cannot be made fail-safe by safety functions in the computer software alone, and system controllers are not always highly reliable. Furthermore, in a complex HAS, one cannot depend exclusively on safety-sensing devices to detect hazardous conditions and undertake appropriate hazard-avoidance strategies.
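The survey counts reported above can be turned into simple proportions. The sketch below uses only the numbers quoted in the text; the variable names are hypothetical, and because no observation period is given here, the results are shares of the reported disturbances rather than annual rates.

```python
# Counts quoted from the survey (Sugimoto 1987).
factories = 190
robots = 4341
disturbances = 300
near_accidents = 37
injury_accidents = 9
fatal_accidents = 2

robots_per_factory = robots / factories
disturbances_per_100_robots = 100 * disturbances / robots

# Disturbances that ended in actual harm (injury or death).
harmful = injury_accidents + fatal_accidents
share_near = near_accidents / disturbances
share_harmful = harmful / disturbances

print(f"robots per factory:           {robots_per_factory:.1f}")
print(f"disturbances per 100 robots:  {disturbances_per_100_robots:.1f}")
print(f"near accidents / disturbance: {share_near:.1%}")
print(f"harmful / disturbance:        {share_harmful:.1%}")
```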

Effects of Automation on Human Health

As discussed above, worker activities in many HAS environments are basically those of supervisory control, monitoring, system support and maintenance. These activities may also be classified into four basic groups as follows: (1) programming tasks (i.e., encoding the information that guides and directs machinery operation), (2) monitoring of HAS production and control components, (3) maintenance of HAS components to prevent or alleviate machinery malfunctions and (4) performing a variety of support tasks. Many recent reviews of the impact of the HAS on worker well-being concluded that although the utilization of a HAS in the manufacturing area may eliminate heavy and dangerous tasks, working in a HAS environment may be dissatisfying and stressful for the workers. Sources of stress included the constant monitoring required in many HAS applications, the limited scope of the allocated activities, the low level of worker interaction permitted by the system design, and safety hazards associated with the unpredictable and uncontrollable nature of the equipment. Even though some workers who are involved in programming and maintenance activities feel the elements of challenge, which may have positive effects on their well-being, these effects are often offset by the complex and demanding nature of these activities, as well as by the pressure exerted by management to complete these activities quickly.

Although in some HAS environments the human operators are removed from traditional energy sources (the flow of work and movement of the machine) during normal operating conditions, many tasks in automated systems still need to be carried out in direct contact with other energy sources. Since the number of different HAS components is continually increasing, special emphasis must be placed on workers’ comfort and safety and on the development of effective injury control provisions, especially in view of the fact that the workers are no longer able to keep up with the sophistication and complexity of such systems.

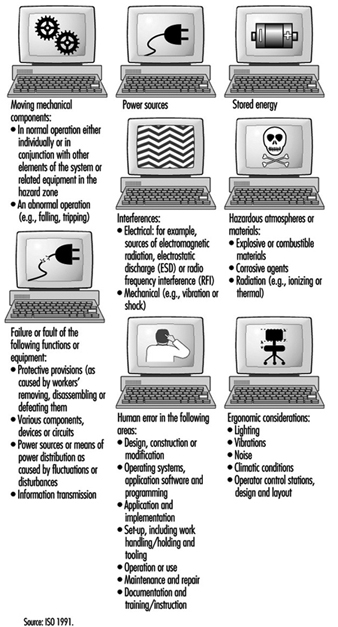

In order to meet the current needs for injury control and worker safety in computer-integrated manufacturing systems, the ISO Committee on Industrial Automation Systems has proposed a new safety standard entitled “Safety of Integrated Manufacturing Systems” (1991). This new international standard, which was developed in recognition of the particular hazards which exist in integrated manufacturing systems incorporating industrial machines and associated equipment, aims to minimize the possibilities of injuries to personnel while working on or adjacent to an integrated manufacturing system. The main sources of potential hazards to the human operators in CIM identified by this standard are shown in figure 1.

Figure 1. Main sources of hazards in computer-integrated manufacturing (CIM) (after ISO 1991)

Human and System Errors

In general, hazards in a HAS can arise from the system itself, from its association with other equipment present in the physical environment, or from interactions of human personnel with the system. An accident is only one of the several outcomes of human-machine interactions that may emerge under hazardous conditions; near accidents and damage incidents are much more common (Zimolong and Duda 1992). The occurrence of an error can lead to one of these consequences: (1) the error remains unnoticed, (2) the system can compensate for the error, (3) the error leads to a machine breakdown and/or system stoppage or (4) the error leads to an accident.

Since not every human error that results in a critical incident will cause an actual accident, it is appropriate to distinguish further among outcome categories as follows: (1) an unsafe incident (i.e., any unintentional occurrence regardless of whether it results in injury, damage or loss), (2) an accident (i.e., an unsafe event resulting in injury, damage or loss), (3) a damage incident (i.e., an unsafe event which results only in some kind of material damage), (4) a near accident or “near miss” (i.e., an unsafe event in which injury, damage or loss was fortuitously avoided by a narrow margin) and (5) the existence of accident potential (i.e., unsafe events which could have resulted in injury, damage, or loss, but, owing to circumstances, did not result in even a near accident).
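The outcome categories above can be read as a small decision procedure applied to any unsafe incident. The sketch below is one possible reading, not a standardized classification: the function and parameter names are hypothetical, and it assumes that an event causing only material damage is recorded under the more specific "damage incident" category rather than as an accident.

```python
from enum import Enum


class Outcome(Enum):
    ACCIDENT = "accident"
    DAMAGE_INCIDENT = "damage incident"
    NEAR_ACCIDENT = "near accident"
    ACCIDENT_POTENTIAL = "accident potential"


def classify_unsafe_incident(
    injury: bool,
    material_damage: bool,
    other_loss: bool,
    narrowly_avoided: bool,
) -> Outcome:
    """Classify an unsafe incident by its consequences (illustrative only)."""
    # Assumption: damage-only events take the more specific category.
    if material_damage and not injury and not other_loss:
        return Outcome.DAMAGE_INCIDENT
    if injury or material_damage or other_loss:
        return Outcome.ACCIDENT
    # No harm occurred: distinguish a narrow escape from mere potential.
    if narrowly_avoided:
        return Outcome.NEAR_ACCIDENT
    return Outcome.ACCIDENT_POTENTIAL


print(classify_unsafe_incident(True, False, False, False).value)
print(classify_unsafe_incident(False, True, False, False).value)
print(classify_unsafe_incident(False, False, False, True).value)
print(classify_unsafe_incident(False, False, False, False).value)
```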

One can distinguish three basic types of human error in a HAS:

- skill-based slips and lapses

- rule-based mistakes

- knowledge-based mistakes.

This taxonomy, devised by Reason (1990), is based on a modification of Rasmussen’s skill-rule-knowledge classification of human performance as described above. At the skill-based level, human performance is governed by stored patterns of pre-programmed instructions represented as analogue structures in a space-time domain. The rule-based level is applicable to tackling familiar problems in which solutions are governed by stored rules (called “productions”, since they are accessed, or produced, at need). These rules require certain diagnoses (or judgements) to be made, or certain remedial actions to be taken, given that certain conditions have arisen that demand an appropriate response. At this level, human errors are typically associated with the misclassification of situations, leading either to the application of the wrong rule or to the incorrect recall of consequent judgements or procedures. Knowledge-based errors occur in novel situations for which actions must be planned “on-line” (at a given moment), using conscious analytical processes and stored knowledge. Errors at this level arise from resource limitations and incomplete or incorrect knowledge.

The generic error-modelling system (GEMS) proposed by Reason (1990), which attempts to locate the origins of the basic human error types, can be used to derive the overall taxonomy of human behaviour in a HAS. GEMS seeks to integrate two distinct areas of error research: (1) slips and lapses, in which actions deviate from current intention due to execution failures and/or storage failures and (2) mistakes, in which the actions may run according to plan, but the plan is inadequate to achieve its desired outcome.
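The correspondence between Rasmussen's performance levels and Reason's error types, as described above, can be summarized as a lookup table. This is only a sketch for orientation: the identifiers are hypothetical, and the two-way split into execution or storage failures versus planning failures follows the distinction between slips and lapses on the one hand and mistakes on the other.

```python
from enum import Enum


class PerformanceLevel(Enum):
    SKILL = "skill-based"
    RULE = "rule-based"
    KNOWLEDGE = "knowledge-based"


# Basic error type associated with each performance level.
ERROR_TYPE = {
    PerformanceLevel.SKILL: "slips and lapses",
    PerformanceLevel.RULE: "rule-based mistakes",
    PerformanceLevel.KNOWLEDGE: "knowledge-based mistakes",
}


def failure_stage(level: PerformanceLevel) -> str:
    """Slips and lapses are execution or storage failures; mistakes are planning failures."""
    if level is PerformanceLevel.SKILL:
        return "execution or storage"
    return "planning"


for level in PerformanceLevel:
    print(f"{level.value}: {ERROR_TYPE[level]} ({failure_stage(level)} failure)")
```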

Risk Assessment and Prevention in CIM

According to the ISO (1991), risk assessment in CIM should be performed so as to minimize all risks and to serve as a basis for determining safety objectives and measures in the development of programmes or plans both to create a safe working environment and to ensure the safety and health of personnel as well. For example, work hazards in manufacturing-based HAS environments can be characterized as follows: (1) the human operator may need to enter the danger zone during disturbance recovery, service and maintenance tasks, (2) the danger zone is difficult to determine, to perceive and to control, (3) the work may be monotonous and (4) the accidents occurring within computer-integrated manufacturing systems are often serious. Each identified hazard should be assessed for its risk, and appropriate safety measures should be determined and implemented to minimize that risk. Hazards should also be ascertained with respect to all of the following aspects of any given process: the single unit itself; the interaction between single units; the operating sections of the system; and the operation of the complete system for all intended operating modes and conditions, including conditions under which normal safeguarding means are suspended for such operations as programming, verification, troubleshooting, maintenance or repair.

The design phase of the ISO (1991) safety strategy for CIM includes:

- specification of the limits of system parameters

- application of a safety strategy

- identification of hazards

- assessment of the associated risks

- removal of the hazards or diminution of the risks as much as practicable.

The system safety specification should include:

- a description of system functions

- a system layout and/or model

- the results of a survey undertaken to investigate the interaction of different working processes and manual activities

- an analysis of process sequences, including manual interaction

- a description of the interfaces with conveyor or transport lines

- process flow charts

- foundation plans

- plans for supply and disposal devices

- determination of the space required for supply and disposal of material

- available accident records.

In accordance with the ISO (1991), all necessary requirements for ensuring a safe CIM system operation need to be considered in the design of systematic safety-planning procedures. This includes all protective measures to effectively reduce hazards and requires:

- integration of the human-machine interface

- early definition of the position of those working on the system (in time and space)

- early consideration of ways of cutting down on isolated work

- consideration of environmental aspects.

The safety planning procedure should address, among others, the following safety issues of CIM:

- Selection of the operating modes of the system. The control equipment should have provisions for at least the following operating modes: (1) normal or production mode (i.e., with all normal safeguards connected and operating), (2) operation with some of the normal safeguards suspended and (3) operation in which system or remote manual initiation of hazardous situations is prevented (e.g., in the case of local operation or of isolation of power to or mechanical blockage of hazardous conditions).

- Training, installation, commissioning and functional testing. When personnel are required to be in the hazard zone, the following safety measures should be provided in the control system: (1) hold to run, (2) enabling device, (3) reduced speed, (4) reduced power and (5) moveable emergency stop.

- Safety in system programming, maintenance and repair. During programming, only the programmer should be allowed in the safeguarded space. The system should have inspection and maintenance procedures in place to ensure continued intended operation of the system. The inspection and maintenance programme should take into account the recommendations of the system supplier and those of suppliers of various elements of the systems. It scarcely needs mentioning that personnel who perform maintenance or repairs on the system should be trained in the procedures necessary to perform the required tasks.

- Fault elimination. Where fault elimination is necessary from inside the safeguarded space, it should be performed after safe disconnection (or, if possible, after a lockout mechanism has been actuated). Additional measures against erroneous initiation of hazardous situations should be taken. Where hazards can arise during fault elimination from other sections of the system or from adjoining systems or machines, these should also be taken out of operation and protected against unexpected starting. Instruction and warning signs should draw attention to fault elimination in system components which cannot be observed completely.
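The operating modes and hazard-zone measures listed above can be pictured as a simple interlock rule. The following Python fragment is a minimal sketch only: the names `OperatingMode`, `HazardZoneMeasures` and `motion_permitted` are hypothetical and do not come from ISO (1991), and the decision rules (no person inside the safeguarded space in normal mode, all five measures active when safeguards are suspended) are simplifying assumptions made for illustration.

```python
from dataclasses import astuple, dataclass
from enum import Enum


class OperatingMode(Enum):
    """The three operating modes named in the text (identifiers are hypothetical)."""
    NORMAL = 1                # all normal safeguards connected and operating
    SAFEGUARDS_SUSPENDED = 2  # some of the normal safeguards suspended
    INITIATION_PREVENTED = 3  # system or remote manual initiation prevented


@dataclass(frozen=True)
class HazardZoneMeasures:
    """The five control-system measures for personnel in the hazard zone."""
    hold_to_run: bool = False
    enabling_device: bool = False
    reduced_speed: bool = False
    reduced_power: bool = False
    moveable_emergency_stop: bool = False

    def all_active(self) -> bool:
        return all(astuple(self))


def motion_permitted(mode: OperatingMode,
                     person_in_zone: bool,
                     measures: HazardZoneMeasures) -> bool:
    """Decide whether system- or remote-initiated motion may proceed.

    Assumption: this rule set is illustrative and is not taken from the standard.
    """
    if mode is OperatingMode.INITIATION_PREVENTED:
        return False  # mode 3 blocks system or remote initiation outright
    if not person_in_zone:
        return True
    if mode is OperatingMode.NORMAL:
        return False  # assumption: nobody is inside the zone in production mode
    return measures.all_active()  # assumption: all five measures are required


if __name__ == "__main__":
    full = HazardZoneMeasures(True, True, True, True, True)
    print(motion_permitted(OperatingMode.SAFEGUARDS_SUSPENDED, True, full))
```

In this sketch, a person in the hazard zone with only some of the measures active blocks motion, which mirrors the intent that suspended safeguards be compensated by the listed control-system provisions.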

System Disturbance Control

In many HAS installations used in computer-integrated manufacturing, human operators are typically needed for controlling, programming, maintaining, pre-setting, servicing or troubleshooting tasks. Disturbances in the system lead to situations that make it necessary for workers to enter the hazardous areas. In this respect, it can be assumed that disturbances remain the most important reason for human intervention in CIM, because the systems will more often than not be programmed from outside the restricted areas. One of the most important issues for CIM safety is to prevent disturbances, since most risks occur in the troubleshooting phase of the system. Avoiding disturbances is thus a common aim of both safety and cost-effectiveness.

A disturbance in a CIM system is a state or function of the system that deviates from the planned or desired state. In addition to affecting productivity, disturbances during the operation of a CIM system have a direct effect on the safety of the people involved in operating it. A Finnish study (Kuivanen 1990) showed that about one-half of the disturbances in automated manufacturing decrease the safety of the workers. The main causes of disturbances were errors in system design (34%), system component failures (31%), human error (20%) and external factors (15%). Most machine failures were caused by the control system, and, within the control system, most failures occurred in sensors. An effective way to increase the level of safety of CIM installations is to reduce the number of disturbances. Although human actions in disturbed systems prevent the occurrence of accidents in the HAS environment, they also contribute to them. For example, a study of accidents related to malfunctions of technical control systems showed that about one-third of the accident sequences included human intervention in the control loop of the disturbed system.
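To make the reported proportions concrete, the short Python sketch below applies the cause shares from Kuivanen (1990) to a disturbance count. The function name `expected_counts` and the total of 200 disturbances are hypothetical and chosen only for illustration; the study's "about one-half" is treated here as exactly 0.5, which is an assumption.

```python
# Cause shares (percent) as reported in the text for Kuivanen (1990).
CAUSE_SHARE_PERCENT = {
    "system design errors": 34,
    "system component failures": 31,
    "human error": 20,
    "external factors": 15,
}

# Assumption: "about one-half" of disturbances reduce worker safety.
SAFETY_REDUCING_FRACTION = 0.5


def expected_counts(total_disturbances: int) -> dict[str, float]:
    """Split a disturbance total across causes using the reported shares."""
    return {
        cause: total_disturbances * percent / 100
        for cause, percent in CAUSE_SHARE_PERCENT.items()
    }


if __name__ == "__main__":
    total = 200  # hypothetical number of logged disturbances
    for cause, count in expected_counts(total).items():
        print(f"{cause}: {count:.0f}")
    print(f"safety-reducing (approx.): {total * SAFETY_REDUCING_FRACTION:.0f}")
```

With the hypothetical total of 200, the split is 68, 62, 40 and 30 disturbances by cause, of which roughly 100 would be expected to reduce worker safety.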

The main research issues in CIM disturbance prevention concern (1) major causes of disturbances, (2) unreliable components and functions, (3) the impact of disturbances on safety, (4) the impact of disturbances on the function of the system, (5) material damage and (6) repairs. The safety of HAS should be planned early at the system design stage, with due consideration of technology, people and organization, and be an integral part of the overall HAS technical planning process.

HAS Design: Future Challenges

To assure the fullest benefit of hybrid automated systems as discussed above, a much broader vision of system development, one which is based on integration of people, organization and technology, is needed. Three main types of system integration should be applied here:

- integration of people, by assuring effective communication between them

- human-computer integration, by designing suitable interfaces and interaction between people and computers

- technological integration, by assuring effective interfacing and interactions between machines.

The minimum design requirements for hybrid automated systems should include the following: (1) flexibility, (2) dynamic adaptation, (3) improved responsiveness, and (4) the need to motivate people and make better use of their skills, judgement and experience. The above also requires that HAS organizational structures, work practices and technologies be developed to allow people at all levels of the system to adapt their work strategies to the variety of systems control situations. Therefore, the organizations, work practices and technologies of HAS will have to be designed and developed as open systems (Kidd 1994).

An open hybrid automated system (OHAS) is a system that receives inputs from and sends outputs to its environment. The idea of an open system can be applied not only to system architectures and organizational structures, but also to work practices, human-computer interfaces, and the relationship between people and technologies: one may mention, for example, scheduling systems, control systems and decision support systems. An open system is also an adaptive one when it allows people a large degree of freedom to define the mode of operating the system. For example, in the area of advanced manufacturing, the requirements of an open hybrid automated system can be realized through the concept of human and computer-integrated manufacturing (HCIM). In this view, the design of technology should address the overall HCIM system architecture, including the following: (1) considerations of the network of groups, (2) the structure of each group, (3) the interaction between groups, (4) the nature of the supporting software and (5) technical communication and integration needs between supporting software modules.

The adaptive hybrid automated system, as opposed to the closed system, does not restrict what the human operators can do. The role of the designer of a HAS is to create a system that will satisfy the user’s personal preferences and allow its users to work in a way that they find most appropriate. A prerequisite for permitting user input is the development of an adaptive design methodology (that is, an OHAS that allows enabling, computer-supported technology for its implementation in the design process). The need to develop a methodology for adaptive design is one of the immediate requirements for realizing the OHAS concept in practice. A new level of adaptive human supervisory control technology also needs to be developed. Such technology should allow the human operator to “see through” the otherwise invisible control system of HAS functioning, for example, by application of an interactive, high-speed video system at each point of system control and operation. Finally, a methodology for developing intelligent and highly adaptive computer-based support of human roles and human functioning in hybrid automated systems is also very much needed.